Most organizations run on a foundation of invisible code. Up to 90% of their software architecture consists of third-party libraries they have neither checked nor mapped, which creates significant software supply chain vulnerabilities. This heavy reliance on pre-built parts has changed software development from writing original logic into an industrial process of assembly. While this shift helps teams ship products faster, it creates a blind spot; the security of your application now depends on the choices of thousands of external contributors.

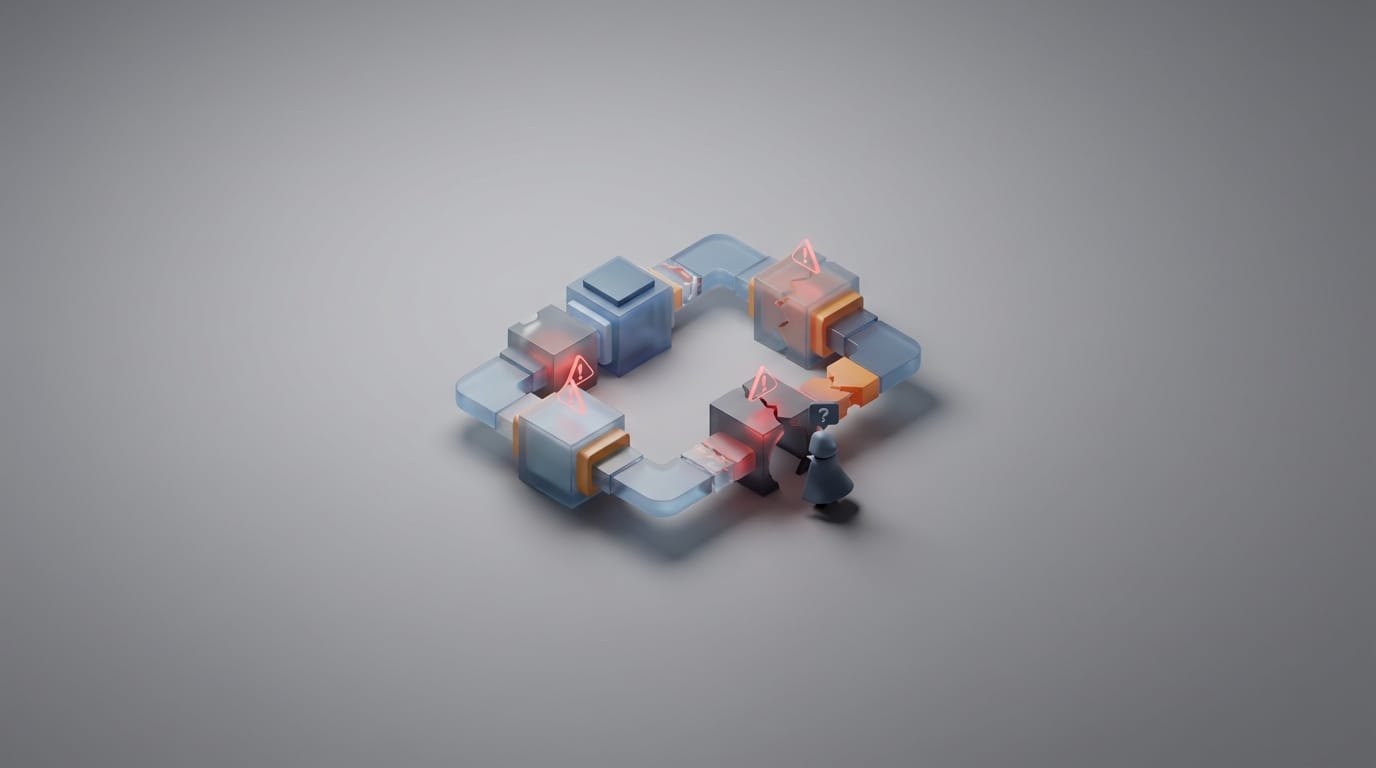

Risks often hide in the code your code relies on. These nested dependencies form a multi-layered black box that standard scanners often fail to see, leaving security teams to defend a hollow perimeter. When someone compromises a single low-level utility library, the effects ripple through the global network. These attacks bypass traditional firewalls because they arrive through signed, trusted software updates that your system already accepts as safe.

Managing these systems requires more than just installing patches. It demands a close look at how code is born, built, and delivered. By studying the mechanics of the software supply chain, we can find where invisible risks hide. This allows teams to build a zero-trust architecture that treats every third-party component as a potential entry point for an attacker.

The Reality of Software Supply Chain Vulnerabilities

Modern teams rarely write software from scratch. Instead, they assemble it from a vast network of open-source parts, frameworks, and APIs. Recent studies show that most applications now use open-source code, with these elements making up nearly 90% of the final product. This shift helps small teams build complex systems, but it also moves security responsibility to a global chain of volunteers.

The scope of this chain goes far beyond the libraries themselves. It includes source code repositories, build tools, CI/CD pipelines, and distribution channels. Each part of this chain relies on inherited trust. If a developer uses a popular container image, they inherit the security habits of whoever made that image. If an attacker takes over that person’s account, they can inject a flaw into your environment without ever triggering an alert.

This reality forces us to think carefully before relying on open-source alternatives for data protection. While open source offers transparency, that transparency only works if someone actually reads the code. Without active checks, organizations are essentially outsourcing their critical systems to people who may not have the time or money to keep things secure.

The Transitive Dependency Crisis

The most common software supply chain vulnerabilities do not exist in the files a developer directly adds to a project. Instead, they hide in transitive dependencies several layers deep. When a developer adds one package, that package might pull in twenty others, which then pull in another hundred. This creates a tree structure where most of the code running in your system was never explicitly requested by your team.

These hidden parts create a visibility gap. Standard security tools often look at top-level files but miss flaws deep in the branches of the tree. For instance, a simple tool for drawing charts might rely on a small, obscure script for cleaning text. If that small script has a flaw, every application using the chart tool becomes an easy target. It does not matter how strong the chart tool itself is if the foundation beneath it is weak.
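The shape of this tree is easier to see in a small sketch. The example below is illustrative only: the package names and the `DEPENDENCY_GRAPH` mapping are hypothetical, standing in for what a real lockfile or package manager would report. It walks the graph breadth-first and separates the packages a team asked for from the ones that arrived indirectly.

```python
from collections import deque

# Hypothetical dependency graph: package -> packages it directly requires.
# A real project would derive this from a lockfile or package manager output.
DEPENDENCY_GRAPH = {
    "my-app": ["chart-tool", "web-framework"],
    "chart-tool": ["text-cleaner", "color-utils"],
    "web-framework": ["http-parser"],
    "text-cleaner": ["unicode-tables"],
    "color-utils": [],
    "http-parser": [],
    "unicode-tables": [],
}


def dependency_depths(graph, root):
    """Breadth-first walk returning {package: depth below root}."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for dep in graph.get(current, []):
            if dep not in depths:  # first visit is the shallowest path
                depths[dep] = depths[current] + 1
                queue.append(dep)
    del depths[root]  # the application itself is not a dependency
    return depths


depths = dependency_depths(DEPENDENCY_GRAPH, "my-app")
direct = sorted(name for name, d in depths.items() if d == 1)
transitive = sorted(name for name, d in depths.items() if d > 1)

print("direct:", direct)
print("transitive:", transitive)
```

Even in this toy graph, two requested packages bring in four more that nobody chose explicitly. A scanner that stops at depth 1 would never look at `text-cleaner` or `unicode-tables`.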

Code rot makes this worse because developers often rely on old packages that no longer get security updates. Data suggests that popular open-source packages contain dozens of flaws because maintainers focus on new features rather than fixing old bugs. This creates a black box that requires deep scanning and constant monitoring to keep safe.

How Attackers Exploit the Pipeline

Attackers now target the tools and storage areas that developers trust. One common trick is dependency confusion. An attacker uploads a harmful package to a public registry using the same name as a private library used inside a company. Many build tools are set up to pick the candidate with the highest version number, wherever it comes from. This causes the build server to pull the harmful public package instead of the safe internal one.
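A minimal sketch of that resolution logic shows why the attack works and one way to blunt it. Everything here is an assumption for illustration: the package name `acme-billing`, the `CANDIDATES` list, and both resolver functions are hypothetical and do not reproduce the behavior of any specific package manager.

```python
def parse_version(text):
    """Turn '1.4.2' into (1, 4, 2) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical candidates a build tool might see for one package name.
CANDIDATES = [
    {"index": "private", "name": "acme-billing", "version": "1.4.2"},
    {"index": "public", "name": "acme-billing", "version": "99.0.0"},
]

# Names that should only ever come from the internal index (assumption).
INTERNAL_NAMES = {"acme-billing"}


def naive_resolve(candidates):
    """Pick the highest version regardless of which index offered it."""
    return max(candidates, key=lambda c: parse_version(c["version"]))


def scoped_resolve(candidates, internal_names):
    """Internal names may only resolve from the private index."""
    allowed = [
        c for c in candidates
        if c["name"] not in internal_names or c["index"] == "private"
    ]
    if not allowed:
        raise LookupError("no candidate from an allowed index")
    return max(allowed, key=lambda c: parse_version(c["version"]))


print(naive_resolve(CANDIDATES)["index"])                    # public
print(scoped_resolve(CANDIDATES, INTERNAL_NAMES)["index"])   # private
```

The naive resolver is fooled by nothing more than an inflated version number. Binding internal names to a single trusted index removes the version race entirely.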

Typosquatting is another constant threat. By giving a harmful package a name that looks like a popular one, such as request-promis instead of request-promise, attackers catch people making small typing errors. Even though many registries now enforce stronger login security, such as two-factor authentication, attackers still win by tricking people or taking over the accounts of trusted contributors. Once they control an account, they can push a signed update that contains a backdoor.
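Near-miss names like this can be flagged before installation. The sketch below uses Python's standard `difflib` module to compare a requested name against a list of well-known packages. The `POPULAR_PACKAGES` list and the 0.85 similarity cutoff are assumptions chosen for the example, not recommended values.

```python
import difflib

# Hypothetical allowlist of well-known package names.
POPULAR_PACKAGES = ["request-promise", "lodash", "express", "left-pad"]


def possible_typosquat(name, known=POPULAR_PACKAGES, cutoff=0.85):
    """Return the popular package that `name` closely resembles, or None.

    An exact match is treated as legitimate. A close but inexact match
    is suspicious and worth a manual look before installing.
    """
    if name in known:
        return None
    matches = difflib.get_close_matches(name, known, n=1, cutoff=cutoff)
    return matches[0] if matches else None


for requested in ["request-promis", "request-promise", "numpy"]:
    lookalike = possible_typosquat(requested)
    if lookalike:
        print(f"{requested}: looks like '{lookalike}', review before installing")
    else:
        print(f"{requested}: no close lookalike found")
```

A check like this produces false positives for legitimately similar names, so it works best as a prompt for review rather than an automatic block.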

The build environment is also a high-value target. If an attacker gets into a build server, they can change the software as it is being made without touching the source code. This is hard to detect because the final file still has the company’s official digital signature. Learning how to verify digital requests to prevent phishing is vital for the engineers who manage these automated systems.

Why Traditional Perimeter Security Fails

Firewalls and standard security software are not built to stop software supply chain vulnerabilities. These tools look for known bad behavior or suspicious files. Supply chain attacks are different because they involve good code doing bad things. Since the harmful logic lives inside a trusted application, it runs with full permission. The system assumes the code is safe because it comes from a known source.

The idea that signed code is always safe is a dangerous mistake. A digital signature only proves where the code came from and that it has not changed since it was signed. It does not prove the author was honest or that their computer was clean. If an attacker poisons the build server, that server will sign the harmful software. This gives the attack a stamp of approval that lets it pass through security checks into production.
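The gap between "signed" and "safe" can be shown in a few lines. This is a sketch under a loud assumption: it uses an HMAC from Python's standard library as a stand-in for a real asymmetric code-signing scheme, and the key and artifact contents are hypothetical. The point it illustrates holds for real signatures too: verification confirms the bytes have not changed since signing, nothing more.

```python
import hashlib
import hmac

# Hypothetical key held by the build server. Real code signing would use
# an asymmetric key pair; HMAC is used here only to keep the sketch short.
SIGNING_KEY = b"hypothetical-build-server-key"


def sign(artifact: bytes) -> str:
    """Produce a signature over the artifact bytes."""
    return hmac.new(SIGNING_KEY, artifact, hashlib.sha256).hexdigest()


def verify(artifact: bytes, signature: str) -> bool:
    """Check that the artifact is unchanged since it was signed."""
    return hmac.compare_digest(sign(artifact), signature)


clean_source = b"print('hello')"

# A compromised build server alters the output BEFORE signing it.
poisoned_artifact = clean_source + b"\n# injected backdoor placeholder"
signature = sign(poisoned_artifact)

print(verify(poisoned_artifact, signature))          # True: signed, but not safe
print(verify(poisoned_artifact + b"x", signature))   # False: changed after signing
```

The poisoned artifact passes verification because the tampering happened upstream of the signature. Only changes made after signing are caught.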

Analysis tools also struggle with the massive size of modern software. A tool might find a flaw in a direct package but fail to see how it affects the rest of the system. This is why implementing a zero-trust architecture is so important. It moves the focus from defending a border to checking every single interaction, no matter where the code originated.

Transparency Through Software Bill of Materials

To stop hidden risks, organizations need a complete list of everything in their software. This is called a Software Bill of Materials (SBOM). An SBOM acts like a list of ingredients for a digital product. It tells security teams exactly which parts and versions are inside their applications. If a new flaw is discovered, the team can quickly check the SBOM to see if they are at risk.
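As a rough illustration of that lookup, the sketch below parses a small SBOM and checks whether a newly reported flaw applies. The document is modeled loosely on the CycloneDX JSON layout (a top-level `components` list with `name` and `version` fields), trimmed to the minimum. The component names, versions, and the `find_affected` helper are hypothetical.

```python
import json

# A minimal SBOM, loosely following the CycloneDX JSON layout.
# Component names and versions are made up for this example.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "chart-tool", "version": "2.3.0"},
    {"type": "library", "name": "text-cleaner", "version": "0.9.1"},
    {"type": "library", "name": "http-parser", "version": "4.1.0"}
  ]
}
"""


def find_affected(sbom, package, bad_versions):
    """Return SBOM components matching a package name and a flawed version."""
    return [
        component
        for component in sbom.get("components", [])
        if component["name"] == package and component["version"] in bad_versions
    ]


sbom = json.loads(SBOM_JSON)

# Suppose an advisory says text-cleaner 0.9.0 and 0.9.1 are flawed.
hits = find_affected(sbom, "text-cleaner", {"0.9.0", "0.9.1"})
for component in hits:
    print(f"at risk: {component['name']} {component['version']}")
```

With an SBOM on file for every release, this question becomes a query across stored documents instead of a manual search through build machines.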

Having a list is only the first step. The amount of data can overwhelm teams and lead to alert fatigue. To solve this, many use Vulnerability Exploitability eXchange (VEX) files. These documents help software makers explain if a flaw actually poses a threat in their specific product. For example, a library might have a bug, but if the application does not use the part of the library with the bug, the VEX record marks it as not affected. This lets teams focus on real threats.
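The filtering step might look like the sketch below. The record layout is simplified and hypothetical. The status labels (`not_affected`, `affected`, `fixed`, `under_investigation`) follow those used by the OpenVEX format, and the vulnerability identifiers are placeholders, not real advisories.

```python
# Scanner findings: every known flaw in every component (placeholder IDs).
FINDINGS = [
    {"component": "text-cleaner", "vulnerability": "EXAMPLE-0001"},
    {"component": "http-parser", "vulnerability": "EXAMPLE-0002"},
    {"component": "color-utils", "vulnerability": "EXAMPLE-0003"},
]

# Simplified VEX statements from the software maker (hypothetical layout).
VEX_STATEMENTS = [
    {
        "component": "text-cleaner",
        "vulnerability": "EXAMPLE-0001",
        "status": "not_affected",
        "justification": "vulnerable_code_not_in_execute_path",
    },
    {
        "component": "http-parser",
        "vulnerability": "EXAMPLE-0002",
        "status": "affected",
    },
]


def triage(findings, statements):
    """Keep only findings that still need attention.

    Findings with no VEX statement are treated as under investigation,
    so silence never hides a potential problem.
    """
    status_by_key = {
        (s["component"], s["vulnerability"]): s["status"] for s in statements
    }
    actionable = []
    for finding in findings:
        key = (finding["component"], finding["vulnerability"])
        status = status_by_key.get(key, "under_investigation")
        if status not in ("not_affected", "fixed"):
            actionable.append({**finding, "status": status})
    return actionable


actionable = triage(FINDINGS, VEX_STATEMENTS)
for item in actionable:
    print(item["component"], item["vulnerability"], item["status"])
```

Defaulting unknown findings to `under_investigation` is a deliberate choice in this sketch: a missing statement should cost the team a review, not a missed threat.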

Automating this process closes the gap between developers and security experts. By making SBOM and VEX checks part of the daily work, organizations keep a live map of their software. This transparency ensures that software updates protect systems as intended, rather than creating new ways for hackers to get in.

Hardening the Build and Delivery Pipeline

Protecting the chain requires a move to a verify-then-trust model. The Supply-chain Levels for Software Artifacts (SLSA) framework helps with this change. SLSA provides steps to improve security, from basic automation to full build integrity with multiple approvals. By reaching higher levels of this framework, organizations make sure their build process is isolated and produces proof that the output was not tampered with.

Applying zero-trust rules to the development cycle involves a few key steps:

- Hermetic Builds: Keep the build environment off the internet and use only pre-checked, local copies of files to prevent last-minute poisoning.

- Multi-Party Approval: Require at least two people to sign off on changes to important scripts or settings.

- Temporary Environments: Use build servers that are deleted after every job to stop malware from sticking around.

- Digital Proof: Create a record of how software was built that the system can check before the code is allowed to run.
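The last two ideas, multi-party approval and digital proof, can be combined into a single gate that runs before deployment. The sketch below is a simplification under stated assumptions: the provenance record is a hypothetical flat dictionary, not the actual SLSA provenance schema, and the builder URL and approver names are invented.

```python
import hashlib

# Hypothetical identity of the only build service allowed to produce releases.
TRUSTED_BUILDERS = {"https://builds.example.com/hermetic-runner"}


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_provenance(artifact, provenance, trusted_builders=TRUSTED_BUILDERS):
    """Return a list of problems; an empty list means the artifact may run."""
    problems = []
    if provenance.get("builder_id") not in trusted_builders:
        problems.append("untrusted builder")
    if provenance.get("artifact_sha256") != sha256_hex(artifact):
        problems.append("digest mismatch")
    if len(set(provenance.get("approvers", []))) < 2:
        problems.append("fewer than two approvers")
    return problems


artifact = b"release-binary-contents"

# Simplified build record (hypothetical layout), written at build time.
provenance = {
    "builder_id": "https://builds.example.com/hermetic-runner",
    "artifact_sha256": sha256_hex(artifact),
    "approvers": ["alice", "bob"],
}

print(verify_provenance(artifact, provenance))            # []
print(verify_provenance(artifact + b"!", provenance))     # ['digest mismatch']
```

In practice the record itself would also need to be signed by the build platform, otherwise an attacker who can alter the artifact could alter the record too. That step is omitted here to keep the example short.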

Treating the build pipeline like a critical system helps stop signed malware. These controls make it far harder for an attacker who takes over a single account to reach the final product. The goal is to make the supply chain as strong as the code it produces by turning the black box into a clear, verifiable machine.

Closing the Visibility Gap

The move from writing code to assembling systems has changed the way we think about safety. Most software supply chain vulnerabilities hide where traditional defenses cannot see. We can no longer treat third-party libraries as safe by default. Instead, we must see them as moving parts of a complex world that needs constant checking. This crisis is a structural reality of modern engineering that requires clear, machine-readable lists and hardened build paths.

The organizations that succeed will be those that treat their supply chain with the same care they give their own code. By using tools like SBOMs and frameworks like SLSA, security leaders can finally look inside the black box. They can stop asking if their code is secure and start asking if they truly know what code they are running. Reclaiming control over your architecture is the only way to stay safe in a world of invisible code.